If you haven’t heard already, Apple has published an open letter explaining that the company will not give the U.S. government access to its customers’ encrypted data. The government wants that data for law enforcement and anti-terrorism investigations.

“When the FBI has requested data that’s in our possession, we have provided it. Apple complies with valid subpoenas and search warrants, as we have in the San Bernardino case. We have also made Apple engineers available to advise the FBI, and we’ve offered our best ideas on a number of investigative options at their disposal.

We have great respect for the professionals at the FBI, and we believe their intentions are good. Up to this point, we have done everything that is both within our power and within the law to help them. But now the U.S. government has asked us for something we simply do not have, and something we consider too dangerous to create. They have asked us to build a backdoor to the iPhone.

Specifically, the FBI wants us to make a new version of the iPhone operating system, circumventing several important security features, and install it on an iPhone recovered during the investigation. In the wrong hands, this software — which does not exist today — would have the potential to unlock any iPhone in someone’s physical possession.

The FBI may use different words to describe this tool, but make no mistake: Building a version of iOS that bypasses security in this way would undeniably create a backdoor. And while the government may argue that its use would be limited to this case, there is no way to guarantee such control.”

To good people who are concerned about making sure that the government has the tools it needs to convict criminals and stop terrorists, this looks like a reasonable request, and Apple looks like it is making us less safe.

Let me explain why I think Apple is right and the government is wrong.

I have worked in the encryption industry. The backdoor that the government is asking for is more complicated than people realize. Without understanding the technology involved, and how modern encryption works, it can be hard to understand what is going on.

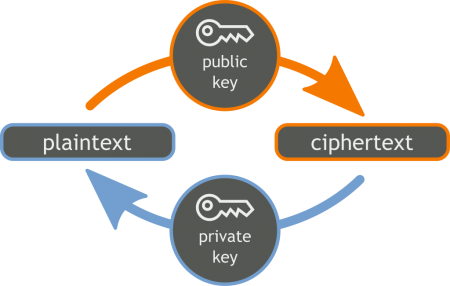

Modern encryption typically involves what is known as Public Key Encryption. It uses two mathematically generated “keys”: a public one that can be given to anyone, and a private one that must be kept secret. Data encrypted with the public key is virtually impossible to read without the private key; using the private key is the only practical way to decrypt it.
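
To make the two-key idea concrete, here is a minimal sketch of textbook RSA, one well-known public-key scheme. This is my own illustration, not anything Apple ships: the primes are tiny on purpose, the function names are made up for the example, and a key this small could be broken by hand.

```python
# Toy RSA with tiny primes: illustration only, trivially breakable at this size.
p, q = 61, 53                 # two secret primes (assumed values for the example)
n = p * q                     # modulus, shared by both keys
phi = (p - 1) * (q - 1)       # used only while generating the keys
e = 17                        # public exponent, chosen coprime to phi
d = pow(e, -1, phi)           # private exponent: modular inverse of e (Python 3.8+)

public_key = (e, n)           # safe to hand to anyone
private_key = (d, n)          # must stay secret


def encrypt(message: int, key: tuple) -> int:
    """Encrypt a number smaller than n with the public key."""
    exponent, modulus = key
    return pow(message, exponent, modulus)


def decrypt(ciphertext: int, key: tuple) -> int:
    """Recover the original number with the private key."""
    exponent, modulus = key
    return pow(ciphertext, exponent, modulus)


ciphertext = encrypt(65, public_key)
recovered = decrypt(ciphertext, private_key)
```

Anyone holding `public_key` can produce `ciphertext`, but only the holder of `private_key` can turn it back into the original number. Real keys use primes hundreds of digits long, which is what makes recovering the private key from the public one impractical.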

Right now, those keys are kept on the device and used to encrypt the data on that device. So for Apple to be able to give access to the government at all, it would have to keep a copy of ALL private keys used on all of its customers’ devices. That is what phone and device manufacturers did previously. Storing private keys for huge numbers of people requires very carefully built systems meant specifically for doing that, and comes with built-in risks. It also makes the manufacturer a bigger target for hackers and techno-terrorists. Customers are more secure when the private keys are stored on their own devices, which is why it is done that way now. But it also means that if the government asks for access to the encrypted data on a device, the manufacturer can no longer provide it, because it doesn’t have the private key and would have to undermine the entire encryption process to make the private keys accessible.

The more important fact is that even if Apple were to go ahead and keep a copy of the private keys and give the government access to them when legally required, or if it were to make it possible to easily retrieve the private keys from the device, it would not really accomplish what the government wants.

Encryption technology is easy to write and widely available in open source projects. Any computer science student can, with a little research, create a program that will encrypt data in a way that makes it basically impossible to crack. Criminals can easily install or write custom software to add additional encryption that neither Apple nor the government can crack. Most people who are serious about keeping their data private are already doing this.
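
To show how little code this takes, here is a minimal sketch of a one-time pad using only Python’s standard library. The function names are hypothetical, and this is an illustration of the point rather than a recommendation: in practice people reach for vetted ciphers in audited libraries, and a one-time pad is only secure if the key is truly random, as long as the message, kept secret, and never reused.

```python
import secrets


def otp_encrypt(plaintext: bytes) -> tuple:
    """Return (key, ciphertext). The key is random and as long as the message."""
    key = secrets.token_bytes(len(plaintext))
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return key, ciphertext


def otp_decrypt(key: bytes, ciphertext: bytes) -> bytes:
    """XOR the ciphertext with the same key to recover the message."""
    return bytes(c ^ k for c, k in zip(ciphertext, key))


key, ciphertext = otp_encrypt(b"meet at noon")
recovered = otp_decrypt(key, ciphertext)
```

Without `key`, the ciphertext is consistent with every possible message of the same length, so neither a device manufacturer nor anyone else can recover it.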

So making Apple keep the private keys, or making the private keys on individual devices easily accessible, only really endangers the privacy of law-abiding citizens while doing nothing to stop the criminals or terrorists, who will simply encrypt their data further using these widely available options.

I highly recommend this article from the American Enterprise Institute:

Encryption: Conflating two technical issues in one policy debate

For a more detailed explanation of Public-Key Cryptography, see Wikipedia: https://en.m.wikipedia.org/wiki/Public-key_cryptography

UPDATE: Looks like in this specific case the FBI isn’t asking for Apple to decrypt the data. It is asking for them to modify the device firmware to remove or circumvent the feature that erases the data on the iPhone if the passcode is entered incorrectly too many times. This would allow them to try different combinations of numbers indefinitely until they figured out the passcode and accessed the iPhone data directly without needing to decrypt.

See: http://readwrite.com/2016/02/17/apple-wont-build-backdoor
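
The passcode guessing described in the update can be sketched in a few lines. This is a simulation under my own assumptions, not Apple’s firmware: `make_lock` is a hypothetical stand-in for the phone, a 4-digit numeric passcode is assumed, and the limit of 10 wrong guesses is a number picked for illustration.

```python
from itertools import product


def make_lock(secret: str, max_attempts=None):
    """Hypothetical lock. With max_attempts set, it 'wipes' after that many wrong guesses."""
    state = {"failed": 0, "wiped": False}

    def check(guess: str) -> bool:
        if state["wiped"]:
            return False          # data is gone; nothing unlocks it any more
        if guess == secret:
            return True
        state["failed"] += 1
        if max_attempts is not None and state["failed"] >= max_attempts:
            state["wiped"] = True
        return False

    return check


def brute_force(check, digits: int = 4):
    """Try every numeric passcode in order; return (passcode or None, attempts made)."""
    for attempts, combo in enumerate(product("0123456789", repeat=digits), start=1):
        guess = "".join(combo)
        if check(guess):
            return guess, attempts
    return None, 10 ** digits


# No limit on wrong guesses: the search always succeeds within 10**4 tries.
found_unlimited = brute_force(make_lock("7391"))

# With the erase feature in place (assumed limit of 10), the same search fails.
found_limited = brute_force(make_lock("7391", max_attempts=10))
```

A 4-digit passcode has only 10,000 possibilities, so the erase-after-too-many-failures feature is what actually stands between a guesser and the data. That is why removing it matters so much.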

This does not change the fact that people can add their own encryption, or use readily available software, to encrypt data so that it remains unreadable by law enforcement. In general, then, circumventing these security features doesn’t actually accomplish what the government wants: even if investigators can brute-force the iPhone passcode, the owner can still have encrypted the files separately.
